MLOps Playbook: Deploying Machine Learning Models Safely at Scale

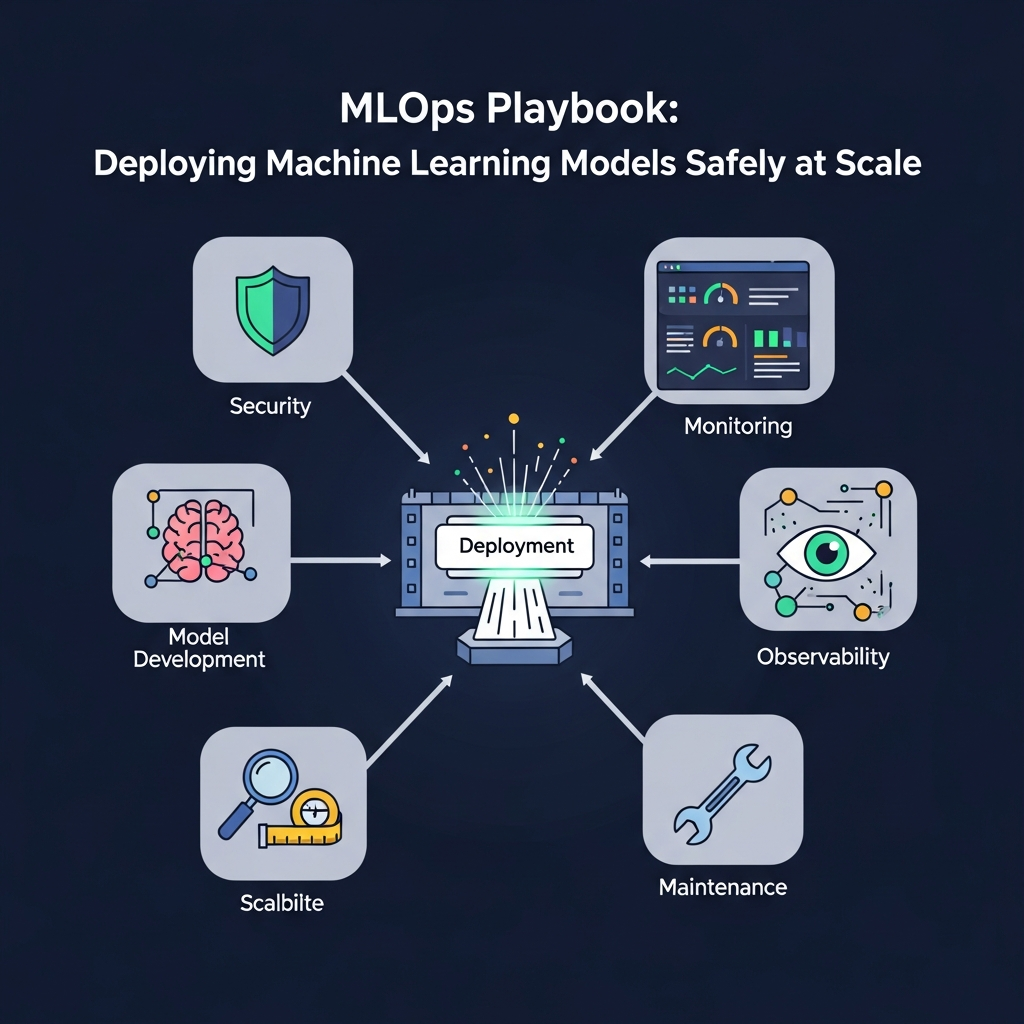

Introduction: Why an MLOps Playbook?

Across product teams, machine learning models evolve from exploratory prototypes to mission-critical components. An MLOps playbook codifies the practices, ceremonies, and tooling that reduce risk while preserving velocity. The goal is to create reliable, auditable, and scalable ML systems that can adapt to changing data and business needs.

This guide focuses on practical steps you can take today: robust CI/CD for ML, observability that reveals real-time health, proactive data drift detection, and governance mechanisms that scale with your organization. You’ll find concrete patterns, checklists, and comparisons to help you choose the right approaches for your context.

Define Production Goals and Governance

The first step is to translate business objectives into production ML goals. Align model performance targets with user impact, risk tolerance, and regulatory constraints. Define service level objectives (SLOs) for latency, accuracy, and reliability, and establish who is accountable for model outcomes at every stage of the lifecycle.

Governance must cover data usage, privacy, versioning, and change management. Create a lightweight catalog of approved data sources, features, and model types. Document escalation paths for incidents and clear criteria for rolling back or retraining a model when thresholds are breached.

- Examples of production goals: maintain 95th percentile latency under 200 ms for online inference; keep model accuracy within a predefined margin of an agreed baseline over a rolling 30-day window.

- Governance artifacts: data lineage diagrams, feature catalogs, model registries, and audit logs.
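
The latency and accuracy goals listed above can be checked mechanically. The following is a minimal sketch, not a prescribed implementation: the function names, the nearest-rank percentile method, and the default values are illustrative assumptions, and a real system would usually read these numbers from its metrics store.

```python
def percentile(values, pct):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    if not values:
        raise ValueError("values must be non-empty")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(values)
    # Integer ceiling division avoids floating-point rounding surprises.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


def latency_slo_met(latencies_ms, threshold_ms=200.0, pct=95):
    """True when the pct-th percentile latency is under the threshold."""
    return percentile(latencies_ms, pct) < threshold_ms


def accuracy_slo_met(daily_accuracy, baseline, margin, window_days=30):
    """True when the rolling mean accuracy stays within `margin` of `baseline`.

    `daily_accuracy` is assumed to hold one accuracy value per day, oldest first.
    """
    recent = daily_accuracy[-window_days:]
    if not recent:
        raise ValueError("daily_accuracy must be non-empty")
    rolling_mean = sum(recent) / len(recent)
    return rolling_mean >= baseline - margin
```

Keeping the check as a pure function makes it easy to reuse the same definition in dashboards, alerts, and release gates.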

Build a Data Foundation for Production ML

Strong data is the backbone of dependable ML. Start with a data strategy that emphasizes quality, provenance, and governance. Implement a feature store to standardize feature definitions, ensure consistency across models, and simplify reusability.

Data quality checks should run at ingestion and prior to model training. Consider automated data profiling, schema validation, and anomaly detection to catch irregularities early. Establish data drift monitoring as a default, with clear thresholds that trigger automated or manual responses.
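
As a rough illustration of a schema validation gate at ingestion, the sketch below checks each record against a declared schema. `ColumnSpec` and `validate_rows` are hypothetical names introduced here, and records are assumed to be plain dictionaries; dedicated validation libraries offer far richer checks.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ColumnSpec:
    """Declared expectations for one column (illustrative, not a standard API)."""
    name: str
    dtype: type
    nullable: bool = False
    min_value: Optional[float] = None
    max_value: Optional[float] = None


def validate_rows(rows, schema):
    """Return a list of human-readable violations; an empty list means the batch passes."""
    errors = []
    for i, row in enumerate(rows):
        for col in schema:
            value = row.get(col.name)
            if value is None:
                if not col.nullable:
                    errors.append(f"row {i}: {col.name} is missing")
                continue
            if not isinstance(value, col.dtype):
                errors.append(
                    f"row {i}: {col.name} expected {col.dtype.__name__}, "
                    f"got {type(value).__name__}"
                )
                continue
            if col.min_value is not None and value < col.min_value:
                errors.append(f"row {i}: {col.name}={value} below {col.min_value}")
            if col.max_value is not None and value > col.max_value:
                errors.append(f"row {i}: {col.name}={value} above {col.max_value}")
    return errors


schema = [
    ColumnSpec("age", int, min_value=0, max_value=120),
    ColumnSpec("country", str, nullable=True),
]
rows = [
    {"age": 34, "country": "DE"},
    {"age": -1, "country": None},    # out of range
    {"country": "US"},               # required field missing
    {"age": "41", "country": "FR"},  # wrong type
]
violations = validate_rows(rows, schema)
```

A pipeline could fail the ingestion step, or quarantine the batch, whenever the returned list is non-empty.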

Practical tip: separate transient training data from production data and maintain a reproducible, versioned data pipeline. This separation reduces the risk of leakage and helps you reproduce results across environments.

ML CI/CD Pipelines: Reproducibility at Scale

Apply software engineering discipline to ML workflows. Treat datasets, feature engineering code, model training, and evaluation metrics as first-class artifacts in your CI/CD pipeline. Use version control for data and models, with immutable artifacts and automated testing at every stage.
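
One way to make artifacts immutable and traceable is to derive their identifiers from their content. The sketch below is an assumption-laden example rather than a specific tool's format: `run_manifest` and its field names are hypothetical, and it simply records the dataset hash, hyperparameters, seed, and code version for a training run.

```python
import hashlib
import json


def run_manifest(dataset_bytes, hyperparams, seed, code_version):
    """Build a manifest whose run_id changes whenever any training input changes."""
    manifest = {
        "dataset_sha256": hashlib.sha256(dataset_bytes).hexdigest(),
        "hyperparams": hyperparams,
        "seed": seed,
        "code_version": code_version,
    }
    # Canonical JSON (sorted keys, fixed separators) makes the hash
    # independent of dictionary ordering.
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    manifest["run_id"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]
    return manifest


manifest = run_manifest(
    dataset_bytes=b"a,b\n1,2\n",
    hyperparams={"learning_rate": 0.1, "max_depth": 3},
    seed=42,
    code_version="abc123",  # e.g. a commit hash; placeholder value
)
```

Storing this manifest next to the trained model gives reviewers a single record to consult when they need to reproduce or audit a run.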

Key components include:

- Training pipelines that are reproducible from raw data to the final model, with deterministic seeds and logged hyperparameters.

- Model evaluation gates that compare current candidates against a baseline using statistically sound metrics.

- Artifact registries for datasets, features, models, and evaluation results with access controls and lineage tracking.
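
An evaluation gate of the kind described above could, for example, use a paired bootstrap on a shared test set. This is one possible statistical approach, chosen here for illustration; the function name, the 95% interval, and the promotion rule are assumptions. Inputs are per-example correctness flags (1 for correct, 0 for incorrect) for the baseline and the candidate on the same examples.

```python
import random


def paired_bootstrap_gate(baseline_correct, candidate_correct,
                          n_resamples=2000, alpha=0.05, seed=0):
    """Estimate a confidence interval for (candidate accuracy - baseline accuracy).

    The candidate is promoted only if the lower bound of the interval is above zero.
    """
    if len(baseline_correct) != len(candidate_correct):
        raise ValueError("both models must be scored on the same examples")
    n = len(baseline_correct)
    if n == 0:
        raise ValueError("need at least one example")

    rng = random.Random(seed)  # fixed seed keeps the gate itself reproducible
    per_example_diff = [c - b for b, c in zip(baseline_correct, candidate_correct)]

    deltas = []
    for _ in range(n_resamples):
        sample = rng.choices(per_example_diff, k=n)  # resample with replacement
        deltas.append(sum(sample) / n)
    deltas.sort()

    lower = deltas[int(alpha / 2 * n_resamples)]
    upper = deltas[int((1 - alpha / 2) * n_resamples) - 1]
    return {"lower": lower, "upper": upper, "promote": lower > 0.0}
```

Requiring the whole interval to sit above zero is deliberately conservative: a candidate that is merely "not worse" stays out of production until more evidence is available.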

Best practice: implement continuous verification, in which every code change triggers a regression test suite for data and model performance, and keep a blue/green or canary deployment path available for models in production.

Model Deployment: Safe, Repeatable Rollouts

Deployment strategies should minimize risk while enabling rapid iteration. Canary and blue/green deployments let you evaluate performance on a small subset of traffic before a full rollout. Automated rollback mechanisms ensure you can revert quickly if a new model underperforms or behaves unexpectedly.
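
To make the canary idea concrete, here is a small sketch of deterministic traffic splitting plus a rollback rule. The function names, the hash-based bucketing, and the thresholds (minimum sample size, 10% relative increase in error rate) are illustrative assumptions; production systems typically delegate routing to a load balancer or service mesh.

```python
import hashlib


def routes_to_canary(request_id, canary_percent):
    """Deterministically send about canary_percent% of requests to the canary.

    Hashing the request (or user) id keeps the assignment stable across retries.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < canary_percent


def should_rollback(baseline_error_rate, canary_error_rate, canary_requests,
                    min_requests=500, max_relative_increase=0.10):
    """Roll back when the canary's error rate is clearly worse than the baseline.

    No decision is made until the canary has served at least min_requests.
    """
    if canary_requests < min_requests:
        return False
    return canary_error_rate > baseline_error_rate * (1 + max_relative_increase)
```

For example, `should_rollback(0.02, 0.05, canary_requests=1000)` returns `True`, while the same error rates with only 100 canary requests return `False` because the sample is still too small to act on.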

Environment parity is essential. Maintain consistent configurations across training, staging, and production, and use infrastructure as code to reproduce environments. Feature toggles allow product teams to decouple release timing from model deployment, giving product leaders control over when a model becomes active for users.

Common pitfalls include cascading failures due to data/schema drift after deployment and underestimating rollback complexity. Plan for both data and model rollback, and verify that rollback paths preserve user experience during a transition.

Model Monitoring and Observability

Production ML isn’t “set it and forget it.” Continuous monitoring surfaces disturbances in data, model behavior, and system health. Instrument models with signals that reflect accuracy, latency, resource usage, and input data quality. Build dashboards that highlight trend shifts, alert on anomalies, and support root-cause analysis.

Three layers of observability are recommended:

- Operational: latency, throughput, CPU/GPU usage, memory, and error rates.

- Model: accuracy against ground-truth labels as they arrive, prediction latency, confidence scores, and output distribution drift.

- Data: input feature distributions, data quality metrics, and data source health.
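
The three layers can share a single rolling-window monitor. The sketch below tracks one signal per layer (error rate, mean confidence, and the share of requests with missing features); the class name, signals, and thresholds are assumptions chosen for illustration, and a real deployment would export these values to its metrics and alerting stack.

```python
from collections import deque


class RollingMonitor:
    """Keeps the most recent `window` predictions and reports threshold breaches."""

    def __init__(self, window=1000, max_error_rate=0.01,
                 min_mean_confidence=0.6, max_missing_rate=0.05):
        self._events = deque(maxlen=window)  # old events drop off automatically
        self.max_error_rate = max_error_rate
        self.min_mean_confidence = min_mean_confidence
        self.max_missing_rate = max_missing_rate

    def record(self, ok, confidence, has_missing_features):
        self._events.append((ok, confidence, has_missing_features))

    def alerts(self):
        n = len(self._events)
        if n == 0:
            return []
        error_rate = sum(1 for ok, _, _ in self._events if not ok) / n
        mean_confidence = sum(conf for _, conf, _ in self._events) / n
        missing_rate = sum(1 for _, _, missing in self._events if missing) / n

        found = []
        if error_rate > self.max_error_rate:
            found.append("operational: error_rate")
        if mean_confidence < self.min_mean_confidence:
            found.append("model: mean_confidence")
        if missing_rate > self.max_missing_rate:
            found.append("data: missing_rate")
        return found
```

Tagging each alert with its layer helps the on-call engineer decide quickly whether to look at infrastructure, the model, or the upstream data.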

Tip: pair real-time alerts with periodic health reviews in which data scientists and engineers jointly investigate anomalies and decide on corrective actions (retraining, feature changes, or data pipeline fixes).

Data Drift Detection and Quality Assurance

Data drift occurs when the statistical properties of input data shift over time, potentially degrading model performance. Establish automatic drift detection with configurable thresholds, and tie drift signals to concrete actions such as retraining or feature engineering adjustments.
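
One commonly used drift score for a single numeric feature is the Population Stability Index (PSI), which compares binned distributions of a reference sample and a recent sample. The sketch below is one possible implementation under stated assumptions: equal-width bins derived from the reference data, out-of-range values clamped to the edge bins, and a small floor to avoid taking the logarithm of zero. The text does not prescribe PSI; other tests, such as Kolmogorov-Smirnov, fit the same slot.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample (`expected`) and a recent sample (`actual`)."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def bin_fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the reference range
            counts[idx] += 1
        floor = 1e-6  # avoids log(0) for empty bins
        return [max(c / len(values), floor) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


reference = [i / 100 for i in range(1000)]  # roughly uniform on [0, 10)
shifted = [v + 5 for v in reference]        # same shape, shifted by 5
psi_same = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, shifted)
```

A widely quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but these cut-offs are conventions and should be tuned per feature.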

Quality assurance for data should include checks on completeness, consistency, and provenance. Maintain data lineage to understand how each feature is produced and why a model was trained on a given dataset.

Best practice: implement automatic retraining triggers when drift crosses a defined threshold, but require human validation for significant drift that could impact business risk or user safety.
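
The policy above maps naturally onto a small decision function. In this sketch the two thresholds, the `high_risk_model` flag, and the `DriftAction` names are hypothetical; the point is only that moderate drift retrains automatically while significant or high-risk drift waits for a person.

```python
from enum import Enum


class DriftAction(Enum):
    NONE = "none"
    AUTO_RETRAIN = "auto_retrain"
    HUMAN_REVIEW = "human_review"


def decide_action(drift_score, retrain_threshold=0.1, review_threshold=0.25,
                  high_risk_model=False):
    """Map a drift score to an action.

    Drift at or above retrain_threshold triggers retraining automatically, unless
    the drift is large or the model is flagged as high risk, in which case a
    human must validate before anything changes.
    """
    if drift_score < retrain_threshold:
        return DriftAction.NONE
    if drift_score >= review_threshold or high_risk_model:
        return DriftAction.HUMAN_REVIEW
    return DriftAction.AUTO_RETRAIN
```

For instance, a score of 0.15 yields `AUTO_RETRAIN` for an ordinary model but `HUMAN_REVIEW` when `high_risk_model=True`.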

Model Governance Framework

A governance framework ensures accountability, compliance, and risk management as the ML program scales. Create a cross-functional governance board that includes product, data science, security, legal, and ethics representatives. Define decision rights for model approvals, updates, and retirements.

Key components include model registries with versioning, audit trails for data and feature usage, and policies for responsible AI (transparency, fairness, and safety). Establish criteria for model retirement, and agree on a clean handoff with production operations so that a model that is no longer suitable for use is withdrawn in an orderly way.
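
To illustrate how versioning, approvals, and audit trails fit together, here is a toy in-memory registry. It is a sketch only: `ModelRegistry`, the stage names, and the allowed transitions are assumptions made for this example and do not mirror any particular registry product.

```python
from datetime import datetime, timezone

# Assumed lifecycle: staging -> production -> retired (no way back).
ALLOWED_TRANSITIONS = {
    "staging": {"production", "retired"},
    "production": {"retired"},
    "retired": set(),
}


class ModelRegistry:
    def __init__(self):
        self._stages = {}    # (model name, version) -> current stage
        self.audit_log = []  # append-only record of who did what

    def _log(self, actor, action, name, version, stage):
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "model": name,
            "version": version,
            "stage": stage,
        })

    def register(self, name, actor):
        """Create the next version of `name` in the staging stage."""
        latest = max((v for (n, v) in self._stages if n == name), default=0)
        version = latest + 1
        self._stages[(name, version)] = "staging"
        self._log(actor, "register", name, version, "staging")
        return version

    def stage_of(self, name, version):
        return self._stages[(name, version)]

    def transition(self, name, version, new_stage, actor):
        """Move a version to a new stage if the lifecycle allows it."""
        current = self._stages[(name, version)]
        if new_stage not in ALLOWED_TRANSITIONS[current]:
            raise ValueError(f"{current} -> {new_stage} is not allowed")
        self._stages[(name, version)] = new_stage
        self._log(actor, "transition", name, version, new_stage)
```

Because retirement is a one-way transition here, bringing a retired model back requires registering a new version, which keeps the audit trail unambiguous.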

Operational Runbooks and Incident Response

Runbooks document standard operating procedures for common ML incidents: degraded accuracy, data source outages, feature store failures, or model drift events. Well-defined steps reduce mean time to detection and mean time to recovery.

Include runbooks for anomaly triage, rollback procedures, and communications plans for stakeholders. Regular tabletop exercises strengthen preparedness and reveal gaps in tooling or governance before real incidents occur.

Scaling Your MLOps Practice

As models proliferate, you’ll encounter fragmentation unless you invest in scalable patterns. Favor modular architectures, standardized interfaces, and reusable components across teams. A centralized model registry, shared feature store, and common CI/CD pipelines help teams avoid duplication and friction.

Consider lifecycle-aware teams with clear ownership: data engineers for data pipelines, ML engineers for model development, and platform engineers for infrastructure. An offshore or hybrid delivery model can accelerate scaling if governance and communication are robust.

Implementation Checklist: 90-Day Plan

Use this pragmatic checklist to bootstrap an MLOps program. Customize it to your context, but aim to complete all of the steps within a single quarter:

- Define production goals and governance artifacts (data lineage, model registry, SLOs).

- Establish a data foundation: data sources, feature store, data quality gates, drift monitoring.

- Implement ML CI/CD pipelines with versioned artifacts and automated testing.

- Set up deployment strategies (canary, blue/green) and rollback procedures.

- Launch observability dashboards covering operations, model health, and data checks.

- Document governance policies and form a cross-functional governance board.

- Develop runbooks for incident response and conduct tabletop exercises.

- Scale gradually: standardize components and train teams on governance and tooling.

Conclusion: Continuous Improvement in Production ML

A successful MLOps playbook is not a one-off project; it is a living system that evolves with data, models, and business needs. By aligning production goals with governance, investing in solid data foundations, and engineering reliable CI/CD and observability, you reduce risk while unlocking faster, more dependable ML delivery.

Use the patterns outlined here as a baseline, then tailor them to your organization’s risk appetite and regulatory requirements. With disciplined processes and collaborative governance, your ML initiatives can scale with confidence and deliver measurable business value.